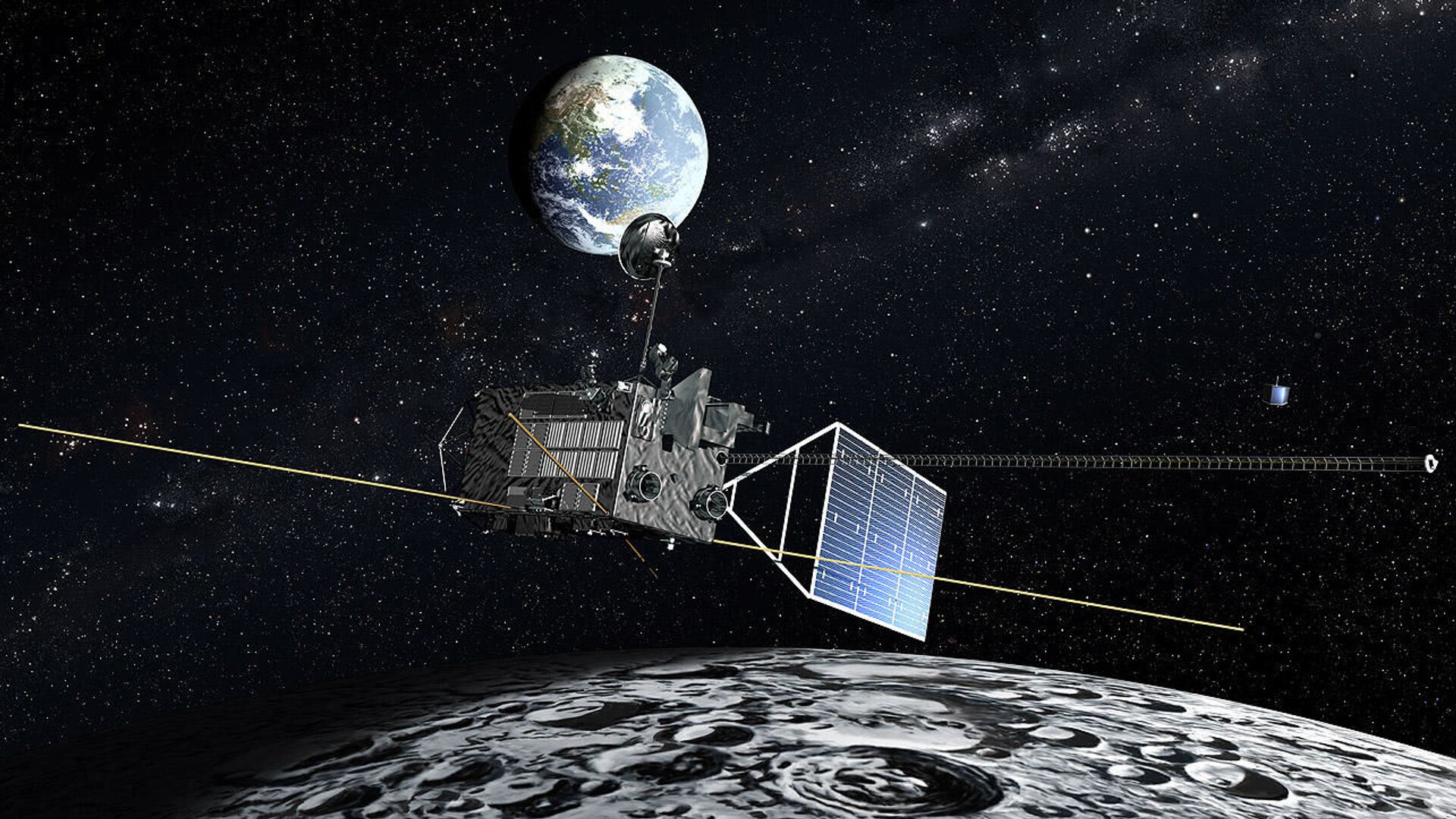

China launches a project that lets mobile phone users observe Earth through high-resolution cameras in space - 18.03.2022, Sputnik Arabic

Home pet monitoring cameras with a mobile app from Smart Click, HD 1080p video, night vision, two-way audio, motion detection, small Wi-Fi camera for monitoring the elderly and children : Amazon.ae: Electronics

Sporty Bluetooth smart watch for men, make/receive calls, mobile phone accessory, fitness tracker with sleep and heart rate (HR) monitoring, reminders, IP68 rating : Amazon.ae: Electronics & Photo

Saudi Arabia: Warning against circulating false decisions attributed to the Kingdom's Ministry of Interior about monitoring mobile phones and social media accounts under the "new communications system"

1080p high-definition wireless camera, home security surveillance cameras with a mobile app, motion-activated nanny cam, camera alerts for indoor home motion detection, from فيوليسيتي, black: buy online at the best prices

5.5 mm wireless monitoring camera, 1920P HD, WiFi, IP67 water and dust resistance, with 6 LED lights, for iPhone, iOS and Android phones, from ديندوكام (2 m) : Amazon.ae: Electronics & Photo

HD wireless surveillance camera from هوب with free 5-day cloud recording, smart mobile app, night vision, motion and sound detection, two-way audio, works with Alexa and Google Home (grey) :