Agricultural Service Center: SNAKE AWAY snake repellent, American formula. Price: 40 shekels. For outdoor use only | Facebook

Bufado (بوفادو) outdoor snake repellent, pet-safe: snake repellent for the yard, repellent balls that keep snakes away from the outdoors, yard, and home. Effective and durable, safe for pets and humans, 8 packs : Amazon.ae: Health

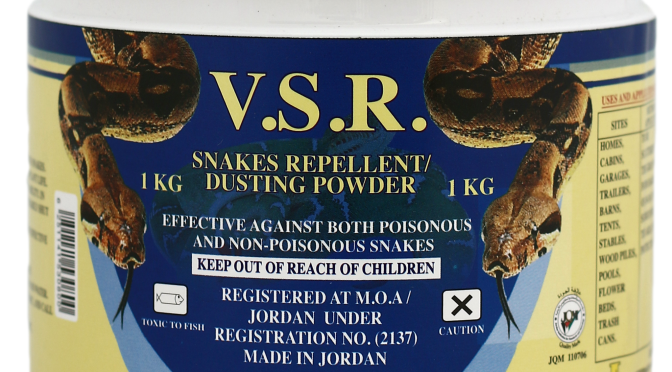

Journalist Ibtihal Aftaima (ابتهال افطيمة): The so-called "snake repellent" being promoted on social media is unsafe and is not registered with the Ministry of Health. Neither its composition nor its effects on humans or pets are known.

TSC TBI (تي اس سي تي بي اي) strong snake repellent for the yard, outdoor snake repellent, pet-safe, snake repellent for outdoors and home, …

Wemowalus (ويموالوس) strong snake repellent for the yard, outdoor snake repellent, pet-safe, 8 packs, rattlesnake repellent for home, yard, and garden : Amazon.ae: Patio, Lawn & Garden